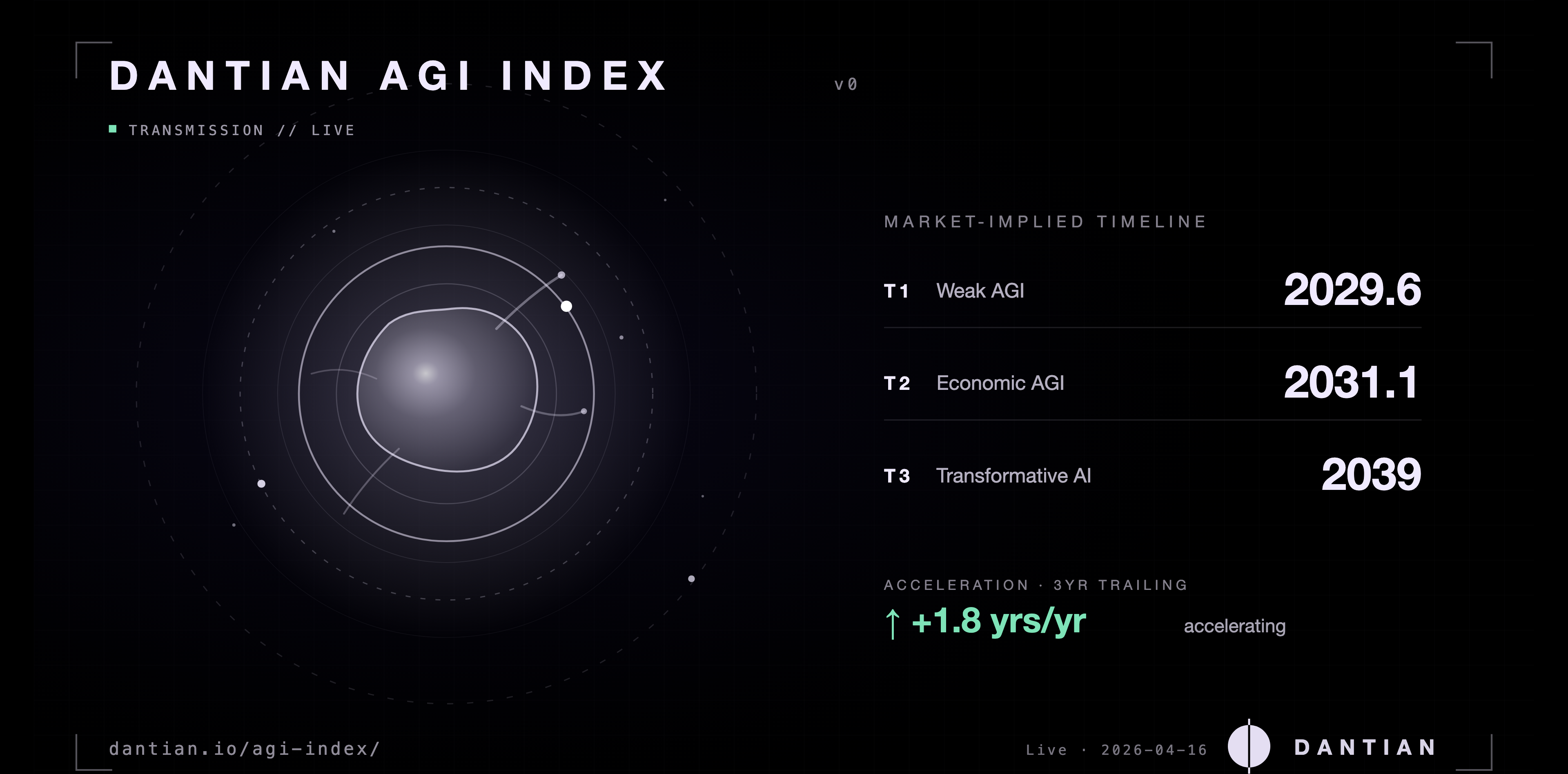

A daily, market-implied estimate of when AGI arrives, reported across three operational tiers (Weak, Economic, Transformative). Pools Metaculus community predictions, Manifold markets, and Kalshi real-money contracts into a single citable year per tier, with a live capability gauge from five public leaderboards (ARC-AGI-2, MMLU-Pro, GPQA Diamond, SWE-bench Verified, LMArena Elo). We do not forecast AGI. We measure what the world currently implies.

Liquidity-weighted composite across Metaculus, Manifold, and Kalshi. Anchored on the Metaculus definition of weak AGI (adversarial Turing test + knowledge benchmarks + physical assembly). The three rings are the three tiers. The blob (AI) grows toward T1 as real time elapses.

Recent shift: The Metaculus weak-AGI median pulled in from roughly 2042 in early 2022 to 2029.6 today, the single largest reference move on AI timelines since tracking began. Manifold's AGI When? market is holding near 2030.5.

Composite breakdown

Five signals feed the index.

What's feeding the index today

Live sources & weights.

| Source | Definition tier | Participants / liquidity | Implied year | Weight |
|---|---|---|---|---|
| Metaculus: Weakly General AI | T1 | 1,723 forecasters | 2028.3 | 23% |
| Manifold: AGI When? (Turing Test) | T1 | 1,071 traders | 2031.8 | 15% |
| Metaculus: Date of AGI | T2 | 1,846 forecasters | 2032.5 | 25% |
| Manifold: Cumulative "AGI before X" | T2 | 1,297 traders (pooled) | 2032.2 | 18% |
| Kalshi: Any company announces AGI | T2 | ~$37k orderbook | 2028.2 | 13% |
| Kalshi: OpenAI announces AGI | T2 | ~$19k orderbook | 2028.6 | 6% |

Methodology

How we compute the number.

Everything is stated ex ante so it can be falsified ex post.

Three operational tiers of AGI

The index reports three tiers because "AGI" has no universally accepted definition.

- T1 Weak AGI. Follows the Metaculus operational definition: an AI system that passes a 2-hour adversarial Turing test, scores above the 90th percentile on SAT/GRE/Winograd, and can assemble a physical model car from instructions.

- T2 Economic AGI. Requires automating at least 50% of human economic labor, in line with OpenAI's working definition.

- T3 Transformative AI. Requires driving global GDP growth above 10% for a full year, the threshold used by Open Philanthropy and the existential-risk research community.

The headline number tracks T1 because it is the most liquid and most specifically operationalized definition. T2 and T3 are reported as shoulder bands so readers who disagree with the T1 framing can read across.

Composite formula

Each data source produces an implied median AGI year. The composite is a weighted mean, DAI(t) = Σᵢ wᵢ · yᵢ(t) with Σᵢ wᵢ = 1, where yᵢ(t) is source i's implied year and the weights are set by participation: forecaster count on Metaculus, trader count on Manifold, and orderbook liquidity on Kalshi. Sources with fewer than 50 participants or insufficient volume are excluded.

As of v0.3: Market is live (Metaculus, Manifold, Kalshi); Capability is live via weekly leaderboard updates (ARC-AGI-2, MMLU-Pro, GPQA Diamond, SWE-bench Verified, LMArena Elo); Expert draws on the AI Impacts 2024 survey, with its influence decaying over time; Compute and Narrative activate in later versions.
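The weighted mean can be sketched in Python as follows. This is a minimal illustration, not the index's pipeline: the `Source` class and its fields are hypothetical, the weights are taken directly from the published table rather than derived from participant counts, and excluded sources are assumed to have their weight redistributed by renormalizing over the survivors. Only the 50-participant rule is modeled, because the Kalshi volume cutoff is not specified.

```python
from dataclasses import dataclass
from typing import Optional

MIN_PARTICIPANTS = 50  # exclusion threshold stated in the methodology


@dataclass
class Source:
    """One input market or forecast question (hypothetical schema)."""
    name: str
    implied_year: float
    weight: float                       # published weight, in percent
    participants: Optional[int] = None  # None for orderbook-weighted Kalshi rows


def is_eligible(s: Source) -> bool:
    # Participant-count rule only; the Kalshi volume cutoff is unspecified,
    # so sources without a participant count are kept.
    return s.participants is None or s.participants >= MIN_PARTICIPANTS


def composite(sources: list[Source]) -> float:
    """DAI(t) = sum_i w_i * y_i(t), with weights renormalized to sum to 1."""
    eligible = [s for s in sources if is_eligible(s)]
    if not eligible:
        raise ValueError("no eligible sources")
    total = sum(s.weight for s in eligible)
    return sum(s.weight * s.implied_year for s in eligible) / total


# Values copied from the "Live sources & weights" table.
SOURCES = [
    Source("Metaculus: Weakly General AI", 2028.3, 23, 1723),
    Source("Manifold: AGI When? (Turing Test)", 2031.8, 15, 1071),
    Source("Metaculus: Date of AGI", 2032.5, 25, 1846),
    Source("Manifold: Cumulative 'AGI before X'", 2032.2, 18, 1297),
    Source("Kalshi: Any company announces AGI", 2028.2, 13),
    Source("Kalshi: OpenAI announces AGI", 2028.6, 6),
]

print(round(composite(SOURCES), 2))
```

With the table's values this prints 2030.58. The table mixes T1 and T2 rows; a per-tier reading would need a tier field and a filter before `composite` is called, which this sketch leaves out.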

Velocity — acceleration toward AGI

Velocity expresses how many years earlier the estimated AGI date has moved per year of real time. Formally: V(t) = −[DAI(t) − DAI(t − 1 yr)] / 1 yr. A value of 0 means the estimate has not moved. A value of +1.0 yrs/yr means the estimated date is pulling in by one year for every year that passes. A value above 1.0 means it is pulling in faster than real time elapses; a value between 0 and 1.0 means the pull-in is slower than real time (deceleration relative to that benchmark); a negative value means the estimate is receding. The current 3-year trailing velocity is +1.8 yrs/yr, driven primarily by the Metaculus Weak AGI median moving from roughly 2042 to 2028.2 since early 2022. v1 will switch to a 90-day rolling velocity as the preferred headline.
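A minimal Python sketch of the velocity formula, assuming DAI is stored as a sorted list of (decimal year, DAI value) observations and linearly interpolated between them. The helper names, the interpolation choice, and the reading of "3-year trailing" and "90-day rolling" as the same formula with a longer or shorter window are assumptions; the `history` values are invented for illustration and are not index data.

```python
from bisect import bisect_left


def dai_at(series: list[tuple[float, float]], t: float) -> float:
    """Linearly interpolate DAI at decimal year t.

    `series` holds (observation_year, dai_value) pairs sorted by year.
    """
    years = [year for year, _ in series]
    if not years or t < years[0] or t > years[-1]:
        raise ValueError("t is outside the observed range")
    i = bisect_left(years, t)  # first index with years[i] >= t
    if years[i] == t:
        return series[i][1]
    (y0, d0), (y1, d1) = series[i - 1], series[i]
    return d0 + (t - y0) / (y1 - y0) * (d1 - d0)


def velocity(series: list[tuple[float, float]], t: float, window_years: float = 1.0) -> float:
    """V(t) = -[DAI(t) - DAI(t - window)] / window, in years per year."""
    earlier = dai_at(series, t - window_years)
    return -(dai_at(series, t) - earlier) / window_years


# Invented series: DAI moves from 2036.0 to 2030.6 over three years.
history = [(2023.0, 2036.0), (2026.0, 2030.6)]
print(round(velocity(history, 2026.0, window_years=3.0), 2))          # 3-year trailing
print(round(velocity(history, 2026.0, window_years=90 / 365.25), 2))  # 90-day rolling
```

Both calls print 1.8 for this invented series. Under this formula a constant estimate yields V = 0.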

What the index is not

The Dantian AGI Index is not a forecast by Dantian. It is a measurement of what prediction markets and expert forecasters currently imply. It is not a recommendation to bet on any market. It is not a claim that AGI has a well-defined arrival date; the band is the point. If Metaculus or another underlying source revises its operational definition, the index version is bumped (v0 to v1 to v2) and prior values remain archived rather than silently redefined.

What would prove this approach wrong

The methodology is stated ex ante so it can be falsified ex post. The index approach would be wrong if any of the following hold over a 24-month observation window:

- Late-lag. Liquidity-weighted prediction markets systematically lag a clearly verified AGI arrival by more than 18 months. The markets-as-measurement premise would fail.

- Tier collapse. The three tier definitions (T1 Weak, T2 Economic, T3 Transformative) fail to track distinguishable real-world events. If they converge onto the same date, the tiered framing adds no information.

- Capability-timeline decoupling. Capability-benchmark saturation correlates weakly or negatively with timeline pull-in. The Capability component (25 percent weight) assumes these move together.

- Market fracture. Two of the three market sources (Metaculus, Manifold, Kalshi) disagree by more than 5 years for an extended period without converging. Aggregation would be measuring noise rather than signal.
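The market-fracture criterion can be checked mechanically. A minimal Python sketch, assuming each platform has been reduced to a single implied year per day and that "an extended period" means at least `min_days` consecutive days; both the per-platform reduction and the default `min_days=90` are hypothetical choices, since the criterion does not fix them. The 5-year gap comes from the criterion itself.

```python
from itertools import combinations

GAP_YEARS = 5.0  # disagreement threshold stated in the criterion


def max_pairwise_gap(day: dict[str, float]) -> float:
    """Largest absolute difference in implied year between any two platforms.

    Requires at least two platforms; otherwise max() raises ValueError.
    """
    return max(abs(a - b) for a, b in combinations(day.values(), 2))


def longest_fracture_run(daily: list[dict[str, float]], gap: float = GAP_YEARS) -> int:
    """Longest run of consecutive days on which some pair differs by more than gap."""
    longest = current = 0
    for day in daily:
        current = current + 1 if max_pairwise_gap(day) > gap else 0
        longest = max(longest, current)
    return longest


def market_fracture(daily: list[dict[str, float]], min_days: int = 90) -> bool:
    # min_days is a hypothetical reading of "an extended period".
    return longest_fracture_run(daily) >= min_days


# "calm" picks one table row per platform (an illustrative choice); "split" is invented.
calm = {"Metaculus": 2028.3, "Manifold": 2031.8, "Kalshi": 2028.2}
split = {"Metaculus": 2028.3, "Manifold": 2035.0, "Kalshi": 2028.2}
days = [calm] * 30 + [split] * 100 + [calm] * 10
print(longest_fracture_run(days), market_fracture(days))
```

This prints `100 True`: the invented split lasts 100 consecutive days with a 6.8-year Manifold/Kalshi gap, while the calm days never exceed 3.6 years.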

Each failure mode triggers a public methodology version bump with the old values archived intact. The index is a research artifact, not a truth claim.

Versioning and transparency

This is methodology version v0.3. Weights, sources, and formulas are fixed until a published version bump. v0.1 introduced Metaculus + Manifold as the T1/T2 composite. v0.2 added a manual-fallback pipeline for the Metaculus community prediction (CP) after the API tightened access. v0.3 (current) added Kalshi as a third market source and activated the Capability component via public benchmark leaderboards. Source data ships as a live JSON feed at /agi-index/data.json under CC BY 4.0.

Further reading

What people are predicting.

The Dantian AGI Index measures when. These essays ask what comes after. A curated reading list spanning optimistic, cautionary, and far-future viewpoints. The index stays neutral. The reading list does not.

Scenario forecasts

AI 2027 — Daniel Kokotajlo, Scott Alexander, Thomas Larsen, Eli Lifland, Romeo Dean. A month-by-month scenario forecast of superhuman AI by 2027, with two possible endings: slowdown or race. Grounded in current capability trends, with agentic research-automation loops as the pivot point.

Situational Awareness: The Decade Ahead — Leopold Aschenbrenner, 2024. Former OpenAI researcher lays out the case that AGI by 2027 is the default trajectory if compute and algorithmic progress continue at current rates. Long-form, technical, treated as canonical by many forecasters.

Optimistic framings

Machines of Loving Grace — Dario Amodei (Anthropic CEO), October 2024. The case that powerful AI could compress fifty to a hundred years of biology and mental-health progress into five to ten years post-AGI. Deliberately counterweighted to the x-risk discourse without denying the risk.

The Most Important Century — Holden Karnofsky (Open Philanthropy), 2021. The argument that the 21st century may be uniquely decisive in human history because of AI. The series where the Transformative AI threshold used as T3 in the index originates.

Cautionary and x-risk

Uncontained AGI Would Replace Humanity — AI Frontiers. The case that an AGI without robust containment becomes the default successor to humanity rather than a tool. Takes the position that the containment problem is load-bearing. The rest follows.

MIRI (Machine Intelligence Research Institute) — Long-running research program on AI alignment and existential risk. Yudkowsky, Soares, and others. Treats AGI as a problem where the default trajectory ends badly unless alignment is solved before capability outruns it.

What comes after

Life After AGI: Emergence and the Human Future — deepfa.ir. A more speculative, philosophical look at what remains distinctly human once cognitive labor no longer is. Treats emergence, not intelligence, as the interesting variable.

Epoch AI — Empirical research on compute trends, capability scaling, and AI economics. Where the Capability component of this index will eventually read from directly. Good first stop for anyone who wants data rather than argument.

Missing something important? Send the link via LinkedIn. The list skews toward pieces that make testable claims or concrete timelines. Polemics without predictions don't earn a spot.